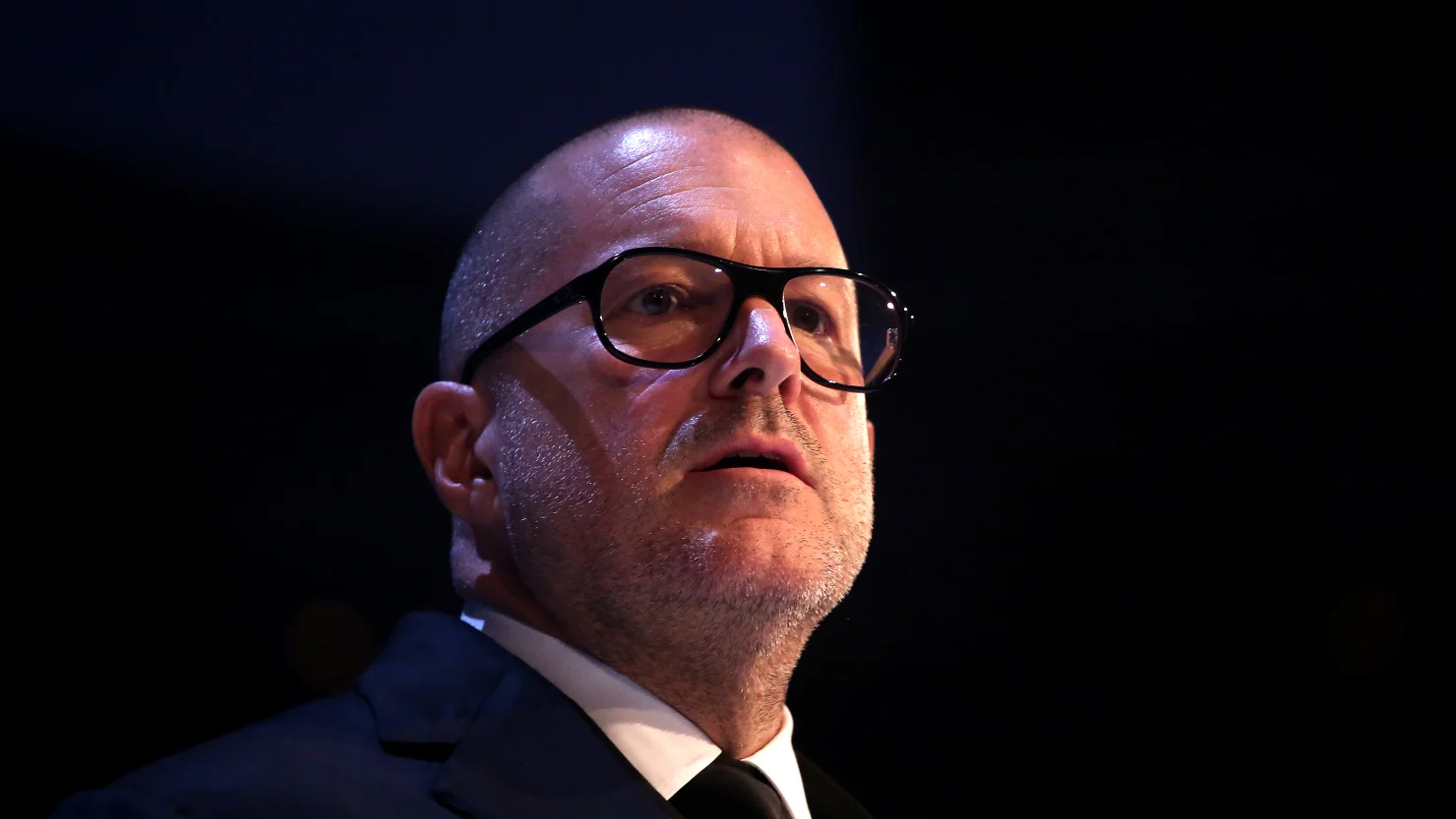

OpenAI CEO Sam Altman called Jony Ive “the greatest designer in the world” on Wednesday after announcing his company’s plan to buy Ive’s artificial intelligence device startup io, in a deal worth $6.4 billion.

The deal signals OpenAI’s intention to build consumer devices, likely meant to get more people using its AI services regularly. Altman and Ive have stayed mum on the specific products they’re planning to roll out, and when, but their partnership shows that OpenAI is taking a big swing: Steve Jobs once described Ive as his “spiritual partner at Apple” and a “wickedly intelligent person in all ways,” according to Walter Isaacson’s 2011 biography of the Apple co-founder.

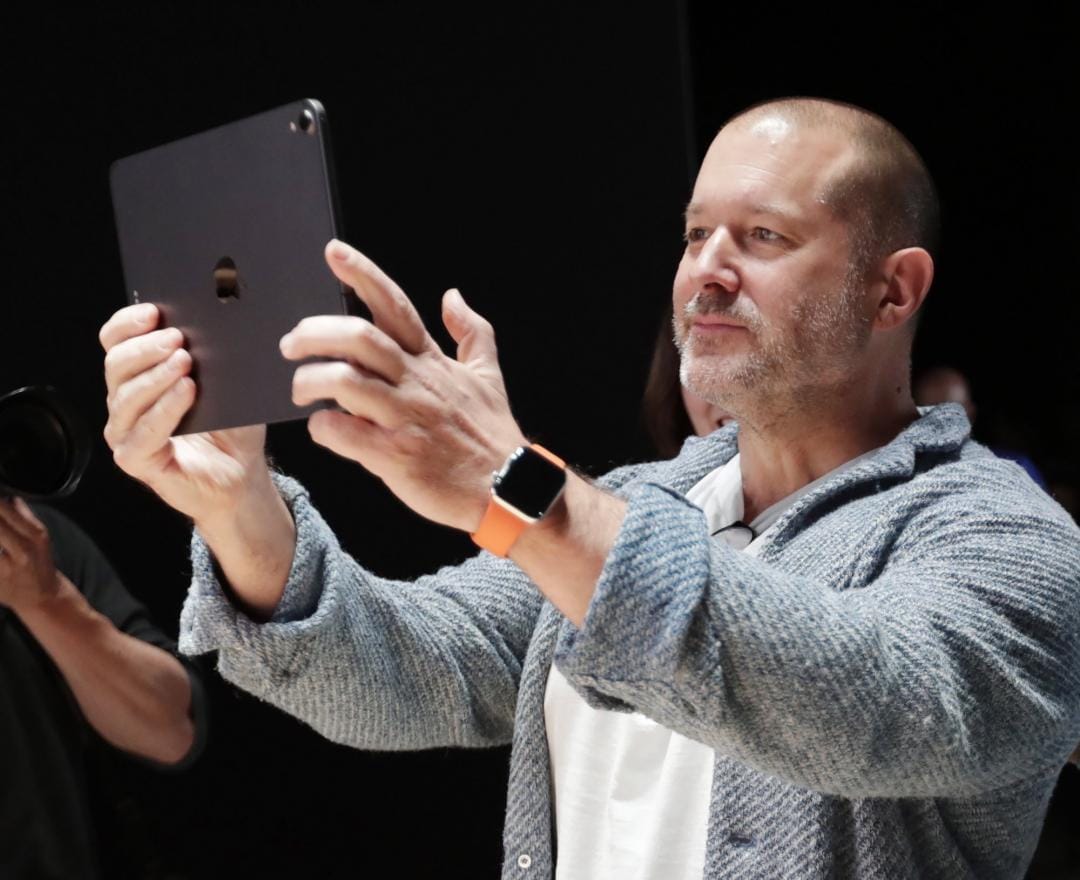

Ive, 58, served as Apple’s chief design officer until 2019 and spent nearly three decades designing some of the tech giant’s most iconic pieces of hardware, from the iMac and MacBook to the iPhone, iPod and iPad. Born in London, he joined Apple in 1992, five years before Jobs returned as CEO to the company he co-founded.

Jobs quickly found a kindred spirit in Ive, later telling Isaacson that the pair typically conceived most of Apple’s new products together, before pulling in other collaborators: “[Ive] understands business concepts, marketing concepts … He gets the big picture as well as the most infinitesimal details about each product.”

When Jobs died in 2011, Ive delivered his eulogy, calling his former boss his “closest and most loyal friend.”

Ive’s first collaboration with Jobs came on the colorful line of iMac personal computers released in 1998, for which the designer created striking features like a translucent plastic case and a handle on the back of the computer. Later, Ive’s focus shifted toward making products like the iPod and iPhone sleek, stylish and easy to use.

Ive also led the design of the Apple Watch and Apple’s AirPods earbuds. “The difference that Jony has made, not only at Apple but in the world, is huge,” Jobs told Isaacson.

When Ive left Apple in 2019 to launch his own independent design firm, LoveFrom, analysts at Deutsche Bank told CNBC News that the tech company was losing “one of [its] most important people.”

What could Ive design for OpenAI?

Altman is tasking Ive with trying to capture some of Apple’s magic, writing in a statement that Ive “will assume deep design and creative responsibilities across OpenAI and io.” The pair first agreed to work together on building a piece of AI-powered hardware two years ago, The New York Times reported in September.

It’s unclear exactly what types of products will result from the partnership. Their vision is for “a product that uses AI to create a computing experience that is less socially disruptive than the iPhone,” the Times wrote. They also want to “help wean users from screens,” and are wary of tech wearables like smart glasses, The Wall Street Journal reported on Wednesday.

Altman was an investor in startup Humane’s AI pin, a small, voice-controlled device users could wear on their lapel and use for phone calls, texts and search queries. The product was released in 2023 to a poor reception, and discontinued before the company began winding down operations in February.

Ive and Altman could be working on something similar to the AI pin, but slightly larger and worn around users’ necks, Apple analyst Ming-Chi Kuo wrote on social media platform X on Thursday. The product, which would connect with smartphones but have no display — not unlike AirPods, in that way — could begin production in 2027, Kuo predicted.

In the past, Ive has said that he relishes the opportunity to design new types of devices that don’t already exist in the world.

“I love working within such a relatively new product category. The opportunities are remarkable as you can be working on just one product that can instantly shatter an entire history of product types and implicated systems,” Ive told the British Council’s Design Museum in a 2005 interview. He pointed to the iPod as an example of a product that “clearly [turned] our users’ previous experience and understanding of storing and listening to music upside down.”